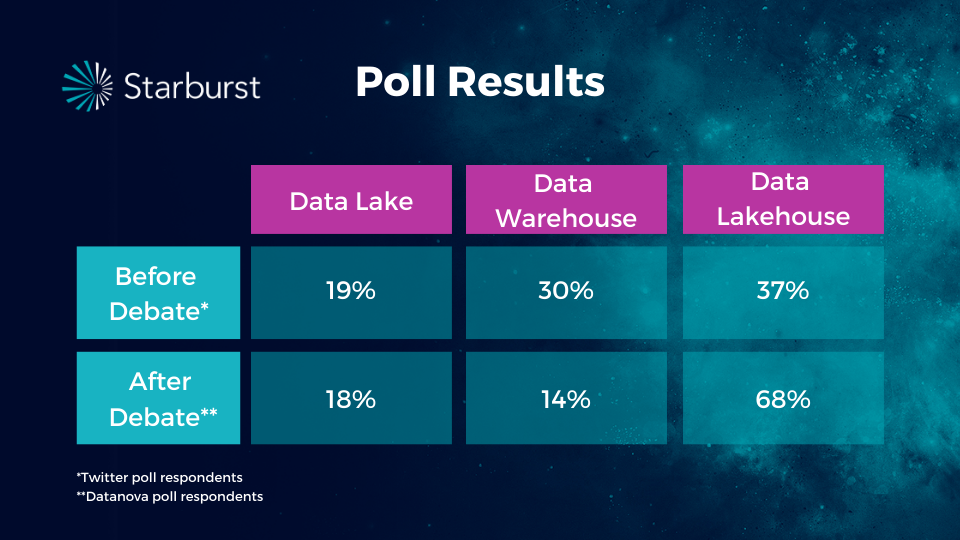

Supports a wide variety of workloads: Data lakehouses can address different use cases across the data management lifecycle. They can support business intelligence and data visualization workstreams as well as more complex data science ones.

Better governance: The data lakehouse architecture mitigates the standard governance issues that come with data lakes. For example, as data is ingested and uploaded, the lakehouse can check that it meets the defined schema requirements, reducing downstream data quality issues.

More scale: In traditional data warehouses, compute and storage were coupled together, which drove up operational costs. Data lakehouses separate storage and compute, allowing data teams to access the same data storage while using different computing nodes for different applications. This results in more scalability and flexibility.

Streaming support: The data lakehouse is built for today's business and technology, where many data sources stream data in real time directly from devices. The lakehouse supports this real-time ingestion, which will only become more popular in the future.
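The schema check mentioned under better governance can be sketched in plain Python. This is only an illustration of the idea, not how any particular lakehouse engine implements it; the schema, the field names (`device_id`, `temperature`, `recorded_at`), and the `conforms` and `ingest` helpers are all hypothetical.

```python
from typing import Any

# Hypothetical schema for illustration: column name -> required Python type.
EXPECTED_SCHEMA: dict[str, type] = {
    "device_id": str,
    "temperature": float,
    "recorded_at": str,
}


def conforms(record: dict[str, Any], schema: dict[str, type]) -> bool:
    """Return True if the record has exactly the schema's columns with matching types."""
    if set(record) != set(schema):
        return False
    return all(isinstance(record[name], expected) for name, expected in schema.items())


def ingest(records: list[dict[str, Any]], schema: dict[str, type]):
    """Split incoming records into those that meet the schema and those that do not."""
    accepted, rejected = [], []
    for record in records:
        (accepted if conforms(record, schema) else rejected).append(record)
    return accepted, rejected


incoming = [
    {"device_id": "sensor-1", "temperature": 21.5, "recorded_at": "2023-01-01T00:00:00Z"},
    {"device_id": 2, "temperature": 19.0, "recorded_at": "2023-01-01T00:00:05Z"},  # wrong type
    {"device_id": "sensor-3", "temperature": 22.1},  # missing recorded_at
]

accepted, rejected = ingest(incoming, EXPECTED_SCHEMA)
print(f"accepted={len(accepted)} rejected={len(rejected)}")
```

Rejecting or quarantining non-conforming records at write time keeps malformed rows out of the shared storage layer, which is where the reduction in downstream data quality issues comes from.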

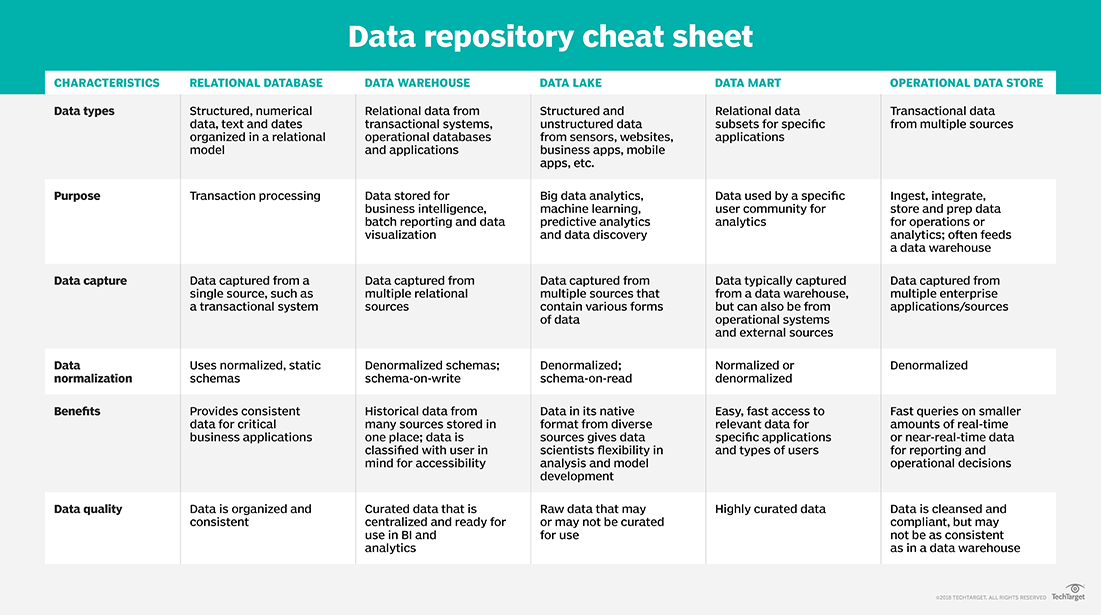

The size and complexity of data lakes can require more technical resources, such as data scientists and data engineers, to navigate the amount of data that they store. Additionally, since data governance is implemented further downstream in these systems, data lakes tend to be more prone to data silos, which can subsequently evolve into a data swamp. When this happens, the data lake can become unusable.

Data lakes and data warehouses are typically used in tandem. Data lakes act as a catch-all system for new data, and data warehouses apply downstream structure to specific data from this system. However, coordinating these systems to provide reliable data can be costly in both time and resources. Long processing times contribute to data staleness, and additional layers of ETL introduce more risk to data quality.

Data lakehouses combine the best features of data warehousing with the best features of data lakes. They leverage similar data structures from data warehouses and pair them with the low-cost storage and flexibility of data lakes, enabling organizations to store and access big data quickly and more efficiently while also mitigating potential data quality issues. They handle both structured and unstructured data, meeting the needs of both business intelligence and data science workstreams, and they typically support programming languages like Python and R as well as high-performance SQL.

Data lakehouses also support ACID transactions on larger data workloads. ACID stands for atomicity, consistency, isolation, and durability, all of which are key properties that define a transaction to ensure data integrity. Atomicity means that all changes to data are performed as if they were a single operation. Consistency means that data is in a consistent state when a transaction starts and when it ends. Isolation means that the intermediate state of a transaction is invisible to other transactions; as a result, transactions that run concurrently appear to be serialized. Durability means that after a transaction successfully completes, changes to data persist and are not undone, even in the event of a system failure. ACID support is critical in ensuring data consistency as multiple users read and write data simultaneously.

Since the data lakehouse was designed to bring together the best features of a data warehouse and a data lake, it yields specific key benefits to its users.

Reduced data redundancy: The single data storage system provides a streamlined platform for all business data demands. Data lakehouses also simplify data observability by reducing the amount of data moving through data pipelines into multiple systems.

Cost-effective: Since data lakehouses capitalize on the lower costs of cloud object storage, their operational costs are comparatively lower than those of data warehouses. Additionally, the hybrid architecture of a data lakehouse eliminates the need to maintain multiple data storage systems, making it less expensive to operate.
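The atomicity property described above can be seen in miniature with Python's built-in `sqlite3` module. SQLite is used here only as a convenient stand-in for a transactional engine, not as a lakehouse; the `accounts` table and the `transfer` helper are hypothetical names chosen for the sketch.

```python
import sqlite3

# In-memory database as a stand-in for a transactional storage engine.
conn = sqlite3.connect(":memory:")
conn.execute(
    "CREATE TABLE accounts ("
    "name TEXT PRIMARY KEY, "
    "balance INTEGER NOT NULL CHECK (balance >= 0))"
)
conn.execute("INSERT INTO accounts VALUES ('a', 100), ('b', 50)")
conn.commit()


def transfer(conn: sqlite3.Connection, src: str, dst: str, amount: int) -> None:
    """Move `amount` from src to dst as one transaction."""
    # Used as a context manager, the connection commits if the block succeeds
    # and rolls back if it raises, so both updates apply or neither does.
    with conn:
        conn.execute(
            "UPDATE accounts SET balance = balance + ? WHERE name = ?", (amount, dst)
        )
        conn.execute(
            "UPDATE accounts SET balance = balance - ? WHERE name = ?", (amount, src)
        )


def balances(conn: sqlite3.Connection) -> dict:
    return dict(conn.execute("SELECT name, balance FROM accounts"))


# The debit would violate the CHECK constraint, so the earlier credit is undone too.
try:
    transfer(conn, "a", "b", 500)
except sqlite3.IntegrityError:
    pass
after_failed = balances(conn)

# A valid transfer commits both updates together.
transfer(conn, "a", "b", 30)
after_success = balances(conn)

print(after_failed, after_success)
```

The failed transfer leaves both balances untouched even though the credit statement had already run, which is the "single operation" behavior that atomicity describes.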

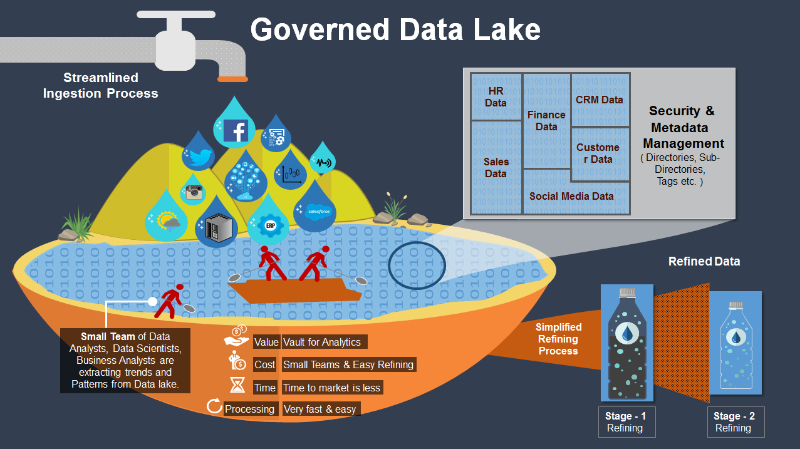

Data lakes are commonly built on big data platforms such as Apache Hadoop. They are known for their low cost and storage flexibility, as they lack the predefined schemas of traditional data warehouses. They also house different types of data, such as audio, video, and text. Since data producers largely generate unstructured data, this is an important distinction: it enables more data science and artificial intelligence (AI) projects, which in turn drive more novel insights and better decision-making across an organization. However, data lakes are not without their own set of challenges.
